
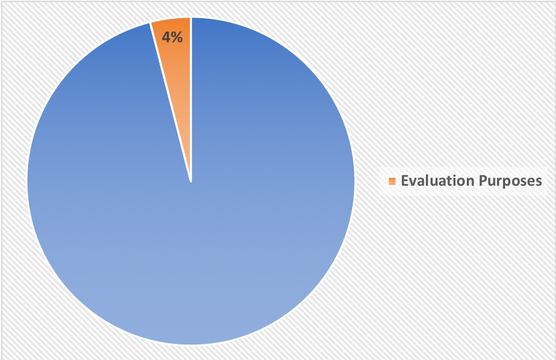

Program Evaluation: the process by which investigators determine how well a program is meeting its desired outcomes.

Evaluation is relevant to all of us, whether we work in the public, private, or nonprofit sector. Examples of programs we might want to evaluate include big federal initiatives like Head Start (early childhood education) or Scared Straight (juvenile behavior intervention), as well as locally run programs like Vancouver’s Insite (a supervised drug injection site) or the day shelters for the homeless near my home in Lane County, Oregon. Evaluators look into these programs, large and small, and ask questions like: “To what extent is this program doing its job?” or “How can we quantify this program’s impact?”

These evaluations can be conducted in-house (i.e., by the office that originally sponsored or runs the program), by third-party evaluation teams, or by some combination of stakeholders working collaboratively on the project. The results of an evaluation might provide evidence of a program’s success; they could also reveal failures, unintended consequences, or unmet goals. Hand in hand with many evaluation projects come the follow-up questions: “What could be done to improve this program?” “What adjustments are feasible and worth making?” and, in some cases, “Should the program continue at all?”

From the researcher’s point of view, key themes in this process include datafication, clever measurement, combining multiple levels of analysis (qualitative, quantitative), and the careful consideration of cost-benefit tradeoffs.

For a Small Price, Measurement Provides Insight

Of the billions of dollars poured into government programs every year, only a very small percentage is allocated to understanding how programs work (process evaluations) or how well they work (impact evaluations). Several decades ago, evaluations rarely existed, and when they did, efforts tended to be underfunded.
The number of Government Accountability Office (GAO) reports calling for improved evaluations over the past several years is unmistakable (GAO-01-822; GAO-06-632T; GAO-10-30; GAO-12-123; GAO-13-570; GAO-14-134; GAO-14-93). Modest recommendations from the GAO suggest that 3-5% of a program’s total budget should be used for evaluative purposes (GAO-15-684). These practices are still not commonplace. For public programs to improve over time, and for program administrators to make valid claims about value added or program effectiveness, the evaluation process must be taken seriously.

Beyond “Conventional Wisdom”

Without evaluation, onlookers have no systematic data that can reliably speak to the efficacy of a program. Lay observation, anecdotal evidence, and even “conventional wisdom” simply do not carry the level of rigor required to make empirical claims about a program’s effectiveness.

Take, for example, the case of Scared Straight, a large-scale government effort designed to steer at-risk youth away from a life of crime by exposing them to hardened inmates who had been locked up for serious offenses. While the idea might have some intuitive appeal (and clearly, it did to the policymakers who created it), evaluations of the program reveal that this kind of intervention doesn’t help teens behave better; it actually heightens criminal activity.

“Seven well-controlled studies that randomly assigned at-risk teens to participate in a scared straight program or a control group found that the kids who took part were, on average, 13% more likely to commit crimes in the following months” ...

The clear benefit of evaluations, then, is that they provide a systematic process by which outcomes can be measured and findings communicated back to stakeholders (the public, program managers, potential sponsors).
This information can be used not only to understand how well programs have achieved their objectives to date, but also to design and improve the quality of future programs.

In my experience, many college students aren’t introduced to program evaluation during their time at university. When I cover the topic in my Applied Psychology course, students react with a mix of interest and surprise. “Why didn’t I know about this earlier?” ... “Why haven’t we been doing this all along?”

The skill sets suitable for an evaluation career are notably similar to the ones you might gain through typical social science courses: survey methods, (quasi-)experimental design, and descriptive and inferential statistics in R, Stata, or SPSS. Encouraging our young people to think about the values and skills applicable to this sort of career path won’t just give them a new place to look for jobs; it can also help the ongoing push toward creating stronger, more effective, more evidence-based policies and programs, a goal shared by anyone who has ever wished our government would “work better.” Doesn’t that sound nice?

Resources and References

Graduate Degree Options in Program Evaluation: https://www.eval.org/p/cm/ld/fid=43

More on Scared Straight: https://www.pewtrusts.org/en/research-and-analysis/blogs/stateline/2015/10/14/evidence-trumps-anecdote-for-scared-straight-programs

Andy Feldman’s Gov Innovator Podcast, Topic Sort “Evaluation / Measuring Impact”: https://govinnovator.com/topic/#evaluation

Fox, C. R., & Sitkin, S. B. (2015). Bridging the divide between behavioral science & policy. Behavioral Science and Policy, 1(1), 16–30.

Petrosino, A., Turpin-Petrosino, C., & Finckenauer, J. O. (2000). Well-meaning programs can have harmful effects! Lessons from experiments of programs such as Scared Straight. Crime & Delinquency, 46, 354–379.
http://dx.doi.org/10.1177/0011128700046003006

“In the end, however, Campbell’s experimental approach to evaluation became just one small part of the field. Critics challenged his Experimenting Society, particularly the notion that experimental methods were preferred for evaluation. They argued that experimental methods were insufficient to address social problems in a world where policy practice is entangled with politics, economy, and social pressures; questioned the importance of noncausal questions and nonexperimental methods; complained that experimentally based knowledge was not fully implemented in solving social problems; and pointed out limitations of experimental methods. Eventually, the field of evaluation rejected Campbell’s Experimenting Society as too narrow and Utopian, preferring a broader vision of the role of evaluation. Even so, because bias remains a central problem for evaluation, the solutions Campbell offered will be his greatest legacy.”


